Here is a scenario you will recognise immediately. A competitor suffers a high-profile data breach. The story runs across LinkedIn for two weeks. Three executives appear before a parliamentary committee. A month later, your leadership team sits down for its quarterly review. Within an hour, everyone agrees: the cybersecurity budget should triple. Nobody has pulled up a risk analysis for your own infrastructure. Nobody has asked whether the actual threat to your organisation has materially changed.

Meanwhile, a burnout crisis has been spreading quietly through the organisation for two years. The absenteeism data is there. The cost, conservatively estimated, is ten times higher than any realistic data breach scenario. But nobody talks about it. It is slow, it is invisible, and it lacks the dramatic charge of a cyberattack on the evening news.

This is the availability heuristic at work. And it is not an occasional glitch: it is the brain’s default mode under time pressure.

The availability heuristic is the tendency to judge the probability of an event based on how easily examples come to mind. Recent, vivid, and emotionally charged information dominates your sense of risk, even when the statistical reality tells a very different story. At work, this produces systematically distorted decisions about risk, strategy, and innovation. This article explains how it operates and how to counter it structurally. For the broader framework, see the SUE Influence Framework.

What is the availability heuristic?

In 1973, Amos Tversky and Daniel Kahneman published one of the most cited papers in the history of psychology. They showed that when people estimate frequency or probability, they do not calculate. Instead, they fall back on a simple rule of thumb: how easily can I think of an example?[1]

They demonstrated this with an elegant experiment. They asked participants: are there more words in the English language that begin with the letter K, or words in which K is the third letter? Most people say: words that begin with K. That answer is wrong. There are far more words where K is the third letter (make, bike, like, take). But words starting with K come to mind much faster. And that speed of retrieval is what the brain translates directly into “more frequent” and “more probable”.
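The point of the experiment is that the question can be settled by counting rather than by recall. As a minimal sketch, the check could look like this in Python. The function name `k_position_counts` and the word list are illustrative assumptions, not part of the original study, and the tiny hand-picked sample proves nothing about English as a whole. Swap in a real corpus or word list to test the claim properly.

```python
from collections import Counter


def k_position_counts(words):
    """Count words that start with 'k' and words with 'k' as the third letter."""
    counts = Counter()
    for word in words:
        w = word.lower()
        if w.startswith("k"):
            counts["first"] += 1
        if len(w) >= 3 and w[2] == "k":
            counts["third"] += 1
    return counts


# Hypothetical, hand-picked sample for illustration only.
sample = ["make", "bike", "like", "take", "king", "keep", "ask", "lake"]
counts = k_position_counts(sample)
print(counts["first"], counts["third"])  # prints "2 6"
```

The design choice is deliberate: the function does not ask which words feel common, it simply tallies positions. That is the difference between measuring frequency and measuring ease of retrieval.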

This is System 1 in action: fast, automatic, and completely below the threshold of conscious awareness. The brain replaces the hard question “how likely is this?” with the much easier question: “how quickly can I think of an example?” And that substitution does not feel like a shortcut. It feels like thinking.

Three dimensions determine how available something is in memory:

Recency. What happened yesterday carries more weight than what you read a year ago. The brain grants fresh memories disproportionate influence, even if they are less representative.

Vividness. A story with concrete details, names, and emotion is easier to recall than an abstract statistic. A colleague describing her burnout in personal terms is more available than the line “27% of employees experience work-related stress” buried in an HR report.

Emotional intensity. Fear, outrage, and shock make memories stickier. A plane crash that leads the news feels more dangerous than driving, even though the statistical reality is precisely the opposite. After 9/11, air travel plummeted while road fatalities rose, because people switched to cars. Gut feeling beat the numbers, with fatal consequences.[2] The same mechanism runs through every leadership meeting where a vivid story overrides the aggregate data.

Your brain does not measure probabilities. It measures how quickly a memory surfaced. And that feels indistinguishable from facts.

Three scenarios where it does the most damage

Risk assessment after a vivid event

A mid-sized technology company hires a new sales director. Three months in, it emerges that he has been inflating pipeline numbers and damaging client relationships. The fallout is significant. The leadership team is shaken. The next time they recruit for a senior sales role, they add five new screening steps, each one aimed precisely at the signals that were visible in this one case: specific LinkedIn patterns, references from former employers of his own choosing, an additional psychometric assessment.

The problem is that they have now armoured themselves against the previous threat. The genuinely relevant risks in the next candidate, perhaps a tendency to overpromise, or weak team leadership skills, remain invisible. They are not vivid. They carry no emotional charge. So they are systematically underestimated.

This is the classic availability heuristic pattern in hiring and risk management: you build your defences around the most recent blow, not around a statistical analysis of the most common risks. It generates the feeling of rigour while creating a selective blindness to anything that does not resemble the last incident.

Strategy dominated by the last complaint

Your head of customer experience walks into a quarterly review carrying NPS data, churn analyses, and a heatmap of customer contact moments. Ten minutes before the meeting, she spoke in the corridor with a furious client who had just cancelled his account following a billing error. She opens the meeting. Which topic dominates the first forty minutes?

The billing problem. Not the structural churn patterns in the data. Not the contact moments that consistently underperform. The one vivid client, with his specific words and his visible frustration, is more available than a thousand anonymous data points in a chart.

I see this pattern in virtually every organisation I work with. Customer strategy is not driven by aggregate data but by the most recent, most vivid interaction. The loudest customer gets the most attention. The silent majority that quietly churns produces no dramatic story, so it stays invisible.

The result is a roadmap that reacts to outliers rather than patterns, and a team that always feels busy but cannot understand why customer satisfaction stubbornly refuses to improve.

Innovation killed by a three-year-old memory

Your team has developed a new product concept. The research is solid. The business case is coherent. In the review session, a senior leader says: “This reminds me of Project Phoenix from three years ago. Everyone was excited about that one. It was a complete failure.”

The energy in the room shifts. Project Phoenix was indeed a painful episode. Everyone remembers it. The questions become more cautious, more sceptical. The concept does not make it to the next stage.

But nobody asked: how comparable are the circumstances, really? Has the market moved? Are the internal capabilities different now? Was Phoenix’s failure driven by the same factors at play today? The memory of Phoenix is vivid, emotionally loaded, and easily accessible. It has, in effect, replaced the risk analysis.

This is how organisations learn to avoid rather than to learn. Earlier failures are not systematically analysed for their specific causes. They become memory traces that colour future decisions. And because the memory of failure is more readily available than the statistical probability of success in a new attempt, fear wins structurally over analysis.

Why the availability heuristic is so hard to fight: an Influence Framework analysis

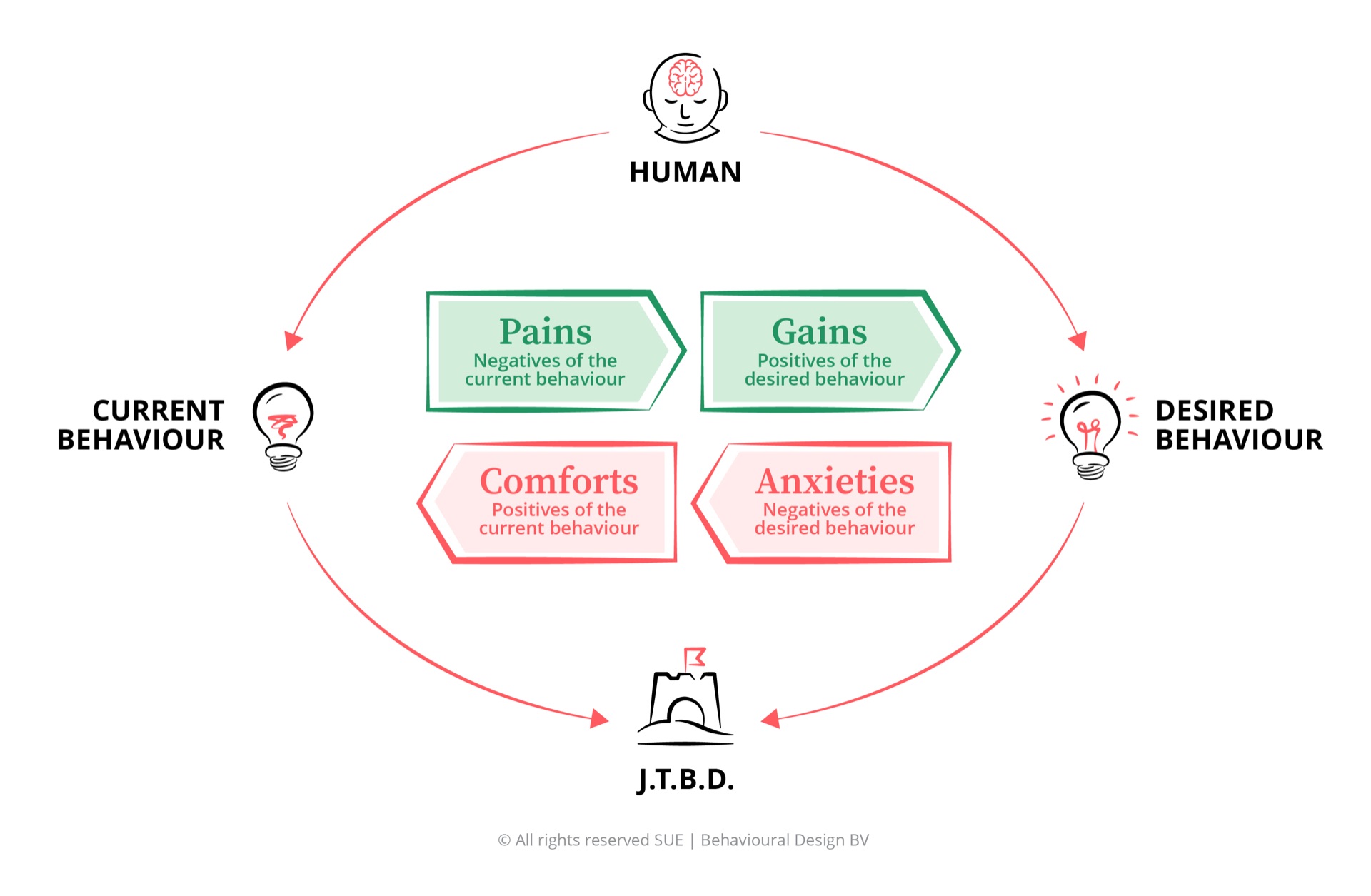

When you analyse the availability heuristic through the SUE Influence Framework, you understand why awareness alone does not work. The heuristic is not a thinking error you can train away. It is a Comfort, deeply rooted in how the brain manages cognitive energy.

The Comforts are the core of it. Trusting what comes to mind easily is cognitively efficient. The brain consumes less energy when it does not need to search for base rates, historical frequencies, and statistical relationships. Using a vivid memory as a proxy for probability does not feel like laziness. It feels like experience, like instinct, like professional judgement. That comfort is powerful.

The Anxieties amplify this. When you challenge the vivid memory, you are implicitly challenging the judgement of the person who raised it. That creates social tension. In a meeting, asking “but do the base rates actually support this?” can feel like dismissing someone’s expertise. So most people do not ask it.

The Pains of the availability heuristic are real but delayed: distorted risk assessments that only become visible months later as failed projects or missed opportunities. The Gains of better decision-making are abstract and future-oriented. Compared with the immediate comfort of a vivid memory steering the room, they lose every time.

This is exactly the same pattern that appears in how media coverage distorts risk perception. Slovic, Fischhoff, and Lichtenstein showed as early as 1982 that public risk perception correlates far more strongly with media volume than with actual mortality rates. Tornadoes are overestimated. Diabetes is underestimated. Not because people are irrational, but because the brain uses availability as a proxy for danger.[2] In the workplace, the same mechanism operates, except the “media” is the last all-hands meeting, or the most recent customer call.

Five interventions that actually work

1. Make base rates mandatory before any risk discussion. Before a team begins a risk analysis following an incident, the first question on the agenda is always: what is the historical base rate of this type of risk in organisations like ours? That forces the conversation from the vivid anecdote toward the statistical reality. Simple to implement as a standing agenda item, powerful in its effect.

2. Maintain systematic risk registers. A risk register updated at fixed intervals decouples the risk agenda from the most recent dramatic event. It makes slow, invisible risks visible alongside the acute, vivid ones. Burnout sits on the register with its real cost figures, next to cybersecurity with its real frequency data. What is on the register gets discussed. What is not, gets ignored.

3. Use decision journals. Ask managers to briefly document significant decisions: what triggered the discussion, what information was on the table, which alternatives were considered. Reviewing these six months later reveals how often recent incidents set the agenda without the underlying analysis justifying it. This builds institutional memory and makes the pattern visible.

4. Structurally diversify information sources. Ensure that strategy meetings are not fed solely by the information that happens to be available in the room, but by a fixed set of sources: customer data covering at least one full quarter, sector-wide benchmark research, internal trends over time. When the structure determines which information reaches the table, the availability heuristic has less room to operate.

5. Build cooling-off periods after dramatic events. Establish an organisational norm that major budget reshuffles or policy changes in response to an incident can only be finalised after a mandatory waiting period of two to four weeks. During that window, a formal analysis based on base rates and historical data is required. This is not bureaucracy. This is structurally reducing the influence of emotional recency on strategic decisions.
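Interventions 1, 2, and 5 lend themselves to a small worked example. The sketch below assumes a risk register in which every entry carries a base rate and a cost estimate, ranks entries by expected annual cost, and computes the earliest date on which a post-incident decision may be finalised. All names (`Risk`, `rank_register`, `earliest_decision_date`) and all figures are hypothetical and chosen purely for illustration. Real registers would draw their numbers from historical and sector data.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Risk:
    name: str
    annual_probability: float  # base rate: chance of occurring in a given year
    cost_if_occurs: float      # estimated cost per occurrence

    @property
    def expected_annual_cost(self) -> float:
        return self.annual_probability * self.cost_if_occurs


def rank_register(register):
    """Order risks by expected annual cost, highest first."""
    return sorted(register, key=lambda r: r.expected_annual_cost, reverse=True)


def earliest_decision_date(incident_date, cooling_off_days=14):
    """First day on which an incident-driven decision may be finalised."""
    return incident_date + timedelta(days=cooling_off_days)


# Hypothetical figures, invented for this example.
register = [
    Risk("data breach", annual_probability=0.05, cost_if_occurs=2_000_000),
    Risk("burnout-related absenteeism", annual_probability=0.90, cost_if_occurs=1_200_000),
]

for risk in rank_register(register):
    print(f"{risk.name}: {risk.expected_annual_cost:,.0f}")

print(earliest_decision_date(date(2024, 3, 1)))
```

With these assumed numbers, the slow and undramatic risk ranks above the vivid one. The ranking is only as good as the base rates behind it, but it moves the conversation from which risk is easiest to picture to which risk costs the most in expectation.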

How the availability heuristic connects to other biases

The availability heuristic rarely operates alone. At work, it combines with other cognitive shortcuts in ways that make it harder to diagnose and harder to counter.

Confirmation bias is the most common amplifier. Once a recent event has skewed your risk perception, confirmation bias filters all incoming information through that distorted lens. You notice signals that confirm the threat and discount signals that relativise it. The availability heuristic creates the initial distortion; confirmation bias consolidates it.

Anchoring bias locks in alongside it: the first vivid number or incident that lands on the table serves as an anchor for all subsequent estimates. If someone opens with “the breach at our competitor cost them four million,” that figure anchors the conversation about your own risk budget, regardless of whether the comparison is actually relevant.

Optimism bias works in an interesting counterpoint. For risks that feel distant and produce no vivid examples, people tend toward underestimation. The availability heuristic causes overestimation of vivid risks; optimism bias causes underestimation of abstract ones. Together they create a distorted risk landscape where visible dangers receive too much attention and real dangers too little.

The framing effect amplifies everything: how a risk is presented, as a potential loss or a potential gain, as an isolated incident or a systemic pattern, partly determines how available the associated examples become. A well-framed incident dominates the agenda; a poorly framed structural problem disappears from view.

Frequently asked questions

What exactly is the availability heuristic?

The availability heuristic is a mental shortcut in which you estimate the likelihood of an event based on how easily an example comes to mind. Because recent, vivid, or emotionally charged experiences come to mind most readily, you systematically overestimate how often or how likely they are. Tversky and Kahneman first described this mechanism in 1973, laying the groundwork for modern behavioural economics.

Why does the availability heuristic distort risk assessment so strongly?

Risk assessment requires abstract probability calculation, something System 1 is genuinely poor at. Instead, the brain replaces the hard question “how likely is this?” with the easy question “how quickly can I think of an example?”. Dramatic events (a data breach, a plane crash, a corporate scandal) dominate memory not because they are frequent, but because they are vivid and emotionally loaded. That vividness gets misread as frequency.

How does the availability heuristic differ from confirmation bias?

Confirmation bias is about filtering information to confirm existing beliefs. The availability heuristic is about estimating probability based on what comes to mind most easily. They reinforce each other: once a vivid event has skewed your risk perception, confirmation bias then filters new information to reinforce that distorted picture. In practice, they operate as a pair.

Can training reduce the availability heuristic?

Training raises awareness but does not eliminate the heuristic. The brain continues using System 1 for quick judgements, even when you know the bias exists. The most effective approach is redesigning decision-making processes: making base rates mandatory, maintaining systematic risk registers, and building cooling-off periods into the process after dramatic events before budgets or policies are finalised.

How can I spot the availability heuristic in meetings?

Watch for three signals: the discussion is dominated by a recent incident rather than structural data, someone opens with “I remember clearly when…” as justification for a strategic decision, or the most recent complaint or success is treated as if it represents the whole. When you spot any of these patterns, ask for base rates and aggregate data before moving forward.

Conclusion

The availability heuristic may be the most underestimated cognitive bias in organisational life, precisely because it is so invisible. A dramatic incident feels like relevant evidence. A vivid memory feels like expertise. A recent complaint feels like a pattern. All of them are surrogates for actual analysis. The problem is not that people are irrational. The problem is that System 1 delivers these surrogates without announcing itself.


PS

At SUE, our mission is to use the superpower of behavioural psychology to help people make better choices, for themselves and for others. The availability heuristic is particularly insidious because it confuses leadership with intuition, and intuition with anecdote. The first step is not to trust intuition less, because that is unrealistic. The first step is to design environments in which intuition is fed by statistical reality rather than by the most recent dramatic event. That is Behavioural Design at its core: you cannot reprogram the brain, but you can shape the context so that the brain arrives at better decisions.