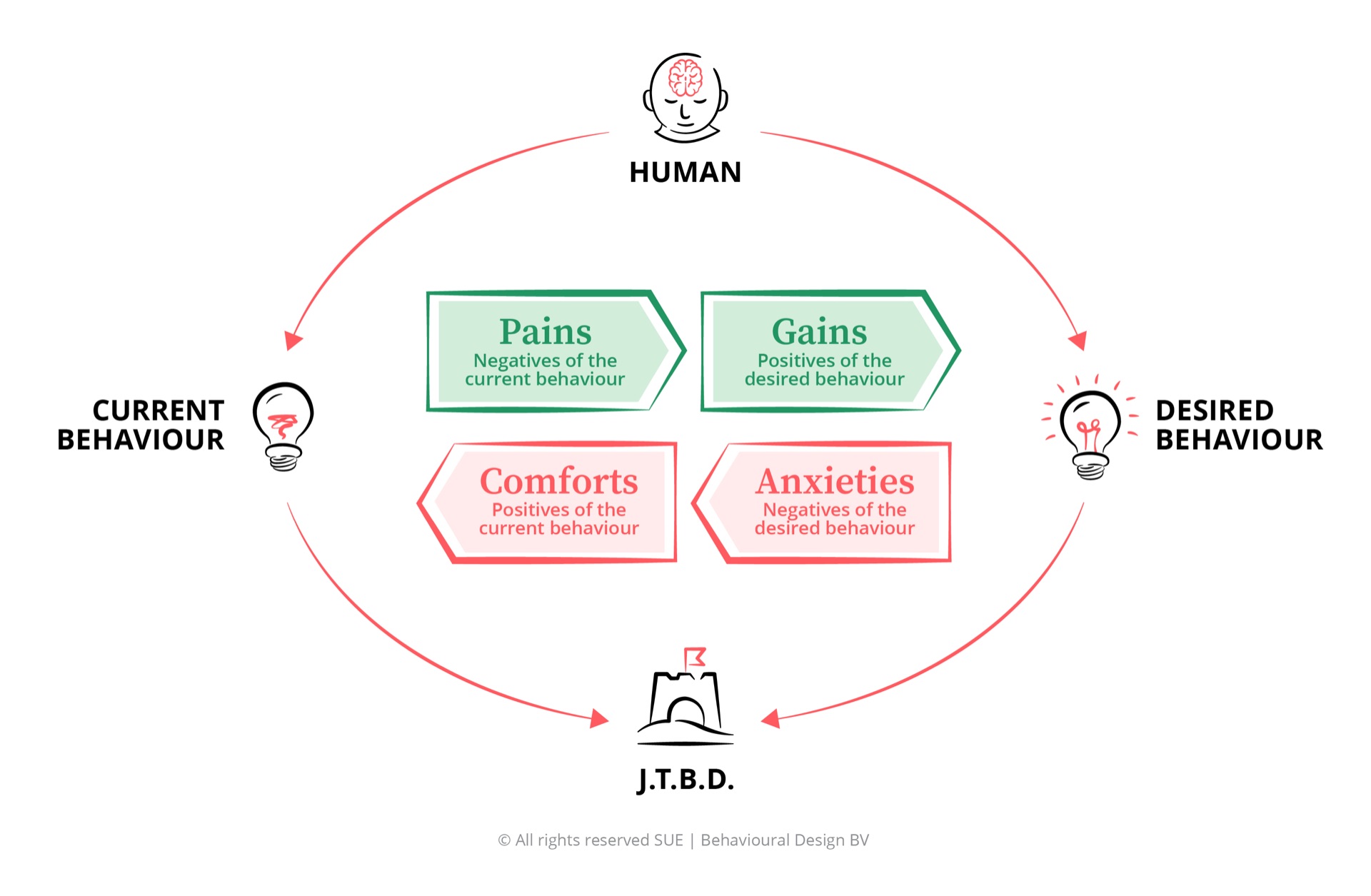

Cognitive biases at work are systematic patterns of deviation from rational judgement that operate automatically below conscious awareness. They are not rare lapses in thinking - they are the default mode of human cognition. At work, they distort hiring decisions, strategic planning, performance assessments, innovation pipelines, and team dynamics in predictable, measurable ways. A cognitive bias arises when the brain uses mental shortcuts (heuristics) to process information quickly. These shortcuts are efficient - necessary, even - but they introduce systematic errors that cannot be corrected by awareness or willpower alone. The solution is not to train people to think differently. It is to redesign the environment in which decisions are made - the core principle of behavioural design as described in Astrid Groenewegen’s The Art of Designing Behaviour (2024).

What is a cognitive bias?

A cognitive bias is a systematic pattern of deviation from rationality in judgement. The key word is systematic. A bias is not a random error - it is a predictable, consistent distortion that follows the same pattern across people, contexts, and decisions. This predictability is what makes biases both so consequential and, in principle, possible to design around.

Biases arise because the brain must process an enormous volume of information with limited cognitive resources. The solution evolution found was heuristics: mental shortcuts that allow fast, good-enough decisions without exhausting conscious processing capacity. In most everyday situations, these shortcuts work well. In high-stakes organisational decisions - who to hire, which strategy to pursue, how to evaluate performance, where to invest - they produce systematic errors.

Daniel Kahneman’s research, summarised in Thinking, Fast and Slow (2011), popularised the dual-process framework that explains why biases are so persistent. System 1 is fast, automatic, and emotional - it operates below conscious awareness and produces intuitive judgements in milliseconds. System 2 is slow, deliberate, and analytical - it requires conscious effort and is used for complex reasoning. Cognitive biases are System 1 phenomena. They are generated before System 2 has a chance to evaluate them. By the time we are consciously aware of a judgement, the bias has already shaped it.

Awareness of a bias does not protect you from it. You cannot think your way out of a System 1 process using System 2 tools.

This is the central insight that most corporate training programmes on cognitive bias miss. “Unconscious bias training” is a System 2 intervention applied to a System 1 problem. It produces awareness, which is real and valuable. On its own, it rarely produces lasting behaviour change. For that, you need to redesign the environment - the defaults, the processes, the moments - in which decisions are made.

Four categories of bias at work

Cognitive biases do not operate randomly. They cluster around specific cognitive functions and therefore tend to appear in predictable organisational contexts. Understanding which category a bias belongs to tells you where to look for it - and where to intervene.

Decision-making biases

These biases distort the process of choosing between options. They include loss aversion (the fear of losing outweighs equivalent gains), the sunk cost fallacy (past investment drives future decisions rather than future value), anchoring (the first number seen disproportionately shapes all subsequent judgements), and the availability heuristic (recent or vivid events are overweighted in probability assessments). Decision-making biases are most costly in strategy, investment allocation, and project continuation decisions.

Social and interpersonal biases

These biases distort how we evaluate and respond to other people. They include confirmation bias (we seek information that confirms existing beliefs about people), affinity bias (we favour people similar to ourselves), the halo effect (a positive impression in one area influences evaluation across all areas), and attribution bias (we explain our own failures situationally but others’ failures dispositionally). Social biases are most costly in hiring, performance management, and team composition.

Self-assessment biases

These biases distort how we evaluate our own competence and judgement. The Dunning-Kruger effect (people with limited knowledge overestimate their competence) and overconfidence bias (we systematically overestimate the accuracy of our own predictions) are the most consequential at work. They appear in project planning, risk assessment, and the evaluation of novel ideas - especially in senior leadership where feedback mechanisms are weakest.

Information-processing biases

These biases distort how we gather, weight, and remember information. Framing effects (identical information presented differently produces different decisions), the recency effect (recent information is overweighted), and the clustering illusion (we see patterns in random data) distort strategic analysis, performance reviews, and market assessment. They are particularly dangerous because they affect the quality of the raw material - the information - before any decision is made.

How cognitive biases damage organisations

The organisational cost of unmanaged cognitive biases is not primarily visible in individual bad decisions - it accumulates as systemic underperformance across four domains.

Hiring and talent: Research consistently shows that unstructured interviews are dominated by social biases - affinity bias, halo effect, confirmation bias. The result is homogeneous teams that replicate existing thinking patterns rather than building cognitive diversity. The first impression formed in the opening minutes of an interview determines the outcome in the majority of cases. Everything after that is confirmation-seeking.

Strategy and investment: The sunk cost fallacy keeps failing projects alive long past their economic justification. Loss aversion makes organisations systematically too cautious about novel market opportunities. Overconfidence in forecasting produces project timelines and financial projections that are reliably optimistic. These are not isolated errors - they are structural features of how strategy is made.

Performance management: Performance reviews are rich environments for bias. Recency effects mean that performance from the last month outweighs the preceding eleven. The halo effect means that one visible success or failure colours the entire assessment. Attribution bias means that managers systematically explain their own teams’ failures as the product of circumstances, while putting competitors’ successes down to circumstances rather than skill. The result is evaluations that measure perceived performance rather than actual performance.

Innovation: Confirmation bias is the primary killer of genuinely novel ideas in organisations. When new evidence conflicts with existing beliefs, it is systematically discounted. Status quo bias (the preference for the current state over alternatives) means that the default option in any decision is consistently chosen more often than its actual merits justify. Organisations are structurally conservative not because their people lack ambition, but because the cognitive architecture of their decision-making systematically favours continuity over change.

What actually works: designing around bias

The standard organisational response to cognitive bias - awareness training - addresses a real problem with an insufficient solution. Awareness is the necessary first step. It is not the destination. The research on debiasing interventions consistently shows that awareness without structural change produces limited lasting effect on actual decisions.

What works is redesigning the environment in which decisions are made. This is the principle of behavioural design applied to organisations: change the context, not the person. Here is what that looks like in practice.

Structured decision processes over intuition

Unstructured decisions are bias-rich environments. Structured decisions - with predefined criteria evaluated in a fixed sequence before any overall judgement is formed - dramatically reduce the impact of social and self-assessment biases. In hiring, this means structured interviews with scored criteria applied before any holistic candidate evaluation. In investment decisions, it means pre-commitment to evaluation criteria before seeing proposals. The structure is not bureaucracy - it is the environment within which System 2 can operate.

Pre-mortems before major decisions

A pre-mortem is a structured exercise in which the decision team imagines that the project or decision has already failed - and then works backward to identify the most plausible causes. This simple reframe counteracts overconfidence bias and loss aversion by making failure thinkable before it is irreversible. Research on prospective hindsight, cited by Gary Klein in his work on pre-mortems, suggests that imagining an outcome has already occurred improves the ability to identify the reasons for it by about 30%. A pre-mortem takes ninety minutes. The sunk cost it prevents can take years to recognise.

Cognitive diversity in decision-making bodies

Homogeneous teams produce confirmation bias at the group level. When everyone has a similar background, similar experience, and similar mental models, the group amplifies individual biases rather than correcting them. Building cognitive diversity - different disciplinary backgrounds, different decision-making styles, different life experiences - into the composition of key decision-making bodies is the most durable structural protection against groupthink and confirmation bias at scale.

Devil’s advocate roles and structured dissent

The social cost of disagreeing with a dominant view in a meeting is high. Status quo bias and social conformity biases systematically suppress dissenting perspectives. Institutionalising the role of devil’s advocate - assigning someone the explicit responsibility to challenge the emerging consensus - removes the social cost of disagreement by making it an expected part of the process. The dissent becomes the role, not the person.

Default redesign for recurring decisions

Many organisational decisions are not one-off judgements but recurring choices made in the same context each time. Performance review formats, job posting templates, investment approval processes, meeting agendas - these are defaults that shape thousands of decisions. Redesigning defaults to embed debiasing mechanisms means that the protection operates automatically, without requiring individual effort or awareness each time. This is the highest-leverage intervention: change the default once, and every subsequent decision is better protected.

Explore individual biases at work

Below are detailed analyses of specific cognitive biases in workplace contexts. Each article covers the full Influence Framework diagnosis, three workplace scenarios, five behavioural design interventions, and a FAQ section.

Confirmation Bias at Work

Why your team always agrees with you, and what it costs your hiring, strategy, and team decisions.

Cognitive Dissonance at Work

Why employees keep defending bad decisions, and how to design an environment where course-correcting doesn't cost face.

Dunning-Kruger Effect at Work

How overconfidence in competence distorts hiring assessments, performance conversations, and decisions at every level.

Sunk Cost Fallacy at Work

Why organisations keep investing in failing projects, and how to design exit criteria stronger than past investment.

Loss Aversion at Work

Why organisations are systematically too risk-averse, and how the fear of loss undermines strategic decisions.

Halo Effect at Work

Why one positive trait colours your entire judgement of a candidate, colleague, or idea.

Social Proof at Work

How colleagues' behaviour steers your choices, from meeting dynamics to willingness to innovate.

Anchoring Bias at Work

How the first number in any negotiation, salary discussion, or project estimate shapes every subsequent judgement.

Framing Effect at Work

Why the same information leads to opposite decisions, depending on how you present it.

Status Quo Bias at Work

Why teams and organisations cling to existing processes, even when better alternatives are available.

Availability Heuristic at Work

Why recent or vivid events distort your risk assessment and priorities at work.

Bandwagon Effect at Work

Why teams choose the same direction en masse, not because it's the best, but because everyone else is doing it.

Decision Fatigue at Work

Why the quality of your decisions deteriorates as the day progresses, and how to prevent it.

Scarcity Principle at Work

Why limited availability increases perceived value and how that affects hiring, strategy, and team dynamics.

Optimism Bias at Work

Why project timelines are always too optimistic and how to design realistic estimates without killing motivation.

Endowment Effect at Work

Why people systematically overvalue their own ideas, projects, and processes the moment they feel ownership.

Reciprocity Principle at Work

How the urge to return favours drives teams, negotiations, and collaborations, for better and worse.

Present Bias at Work

Why short-term rewards beat long-term goals and how to design environments that protect future behaviour.

Decoy Effect at Work

How an irrelevant alternative systematically strengthens your preference for another option in every choice.

Peak-End Rule at Work

Why people judge experiences by the most intense moment and the ending, not the average.

Curse of Knowledge at Work

The Curse of Knowledge makes experts unable to gauge what beginners know. Discover how this bias disrupts communication and collaboration.

Hindsight Bias at Work

Hindsight Bias makes us believe we knew it all along. Discover how this bias distorts evaluations and how to design interventions against it.

Negativity Bias at Work

Negative experiences weigh heavier than positive ones. Discover how negativity bias drives decisions and how to reduce its impact.

Frequently Asked Questions

What is a cognitive bias?

A cognitive bias is a systematic pattern of deviation from rationality in judgement. It arises when the brain uses mental shortcuts (heuristics) to process information quickly. At work, cognitive biases operate invisibly in hiring decisions, strategic planning, performance assessments, and team dynamics. They are predictable and universal: every human brain exhibits them, regardless of intelligence, experience, or intention.

What is the difference between System 1 and System 2 thinking?

Daniel Kahneman’s System 1 and System 2 framework distinguishes two modes of cognition. System 1 is fast, automatic, and emotional - it operates below conscious awareness and produces intuitive judgements in milliseconds. System 2 is slow, deliberate, and analytical. Cognitive biases are System 1 phenomena. They operate automatically and cannot be switched off by willpower or awareness. This is why “unconscious bias training” produces limited behaviour change: awareness is a System 2 intervention applied to a System 1 problem.

Which cognitive biases have the most impact at work?

The cognitive biases with the greatest organisational impact are: confirmation bias (we seek information that confirms existing beliefs, making strategic blind spots invisible), the sunk cost fallacy (past investment drives future decisions), the Dunning-Kruger effect (people with limited knowledge overestimate their competence), loss aversion (the fear of losing outweighs equivalent gains), and anchoring bias (the first number encountered disproportionately shapes all subsequent judgements). These five biases appear across hiring, strategy, performance management, and innovation - making them the highest-priority targets for organisational debiasing.

Can cognitive biases be eliminated?

No. Cognitive biases cannot be eliminated because they are features of human cognition, not bugs. What can be done is to design systems, processes, and environments that reduce the impact of predictable biases at the moments where they cause the most harm. This is the core of behavioural design applied to organisations: not changing the people, but redesigning the context in which decisions are made.

What is the best way to reduce cognitive bias in hiring?

The most effective debiasing intervention in hiring is structured interviewing: pre-defined questions asked in a fixed sequence, with scored criteria evaluated independently before any overall candidate assessment. This reduces the impact of first impressions, affinity bias, and confirmation bias by forcing evaluation criteria to be applied before holistic judgements are formed. Work sample tests and blind CV screening provide additional structural protection. Training alone - without process redesign - produces minimal lasting effect on hiring outcomes.
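For teams that run hiring through a spreadsheet or an internal tool, the rule "score the criteria first, form the overall judgement last" can be built into the tool itself. What follows is a minimal sketch in Python, not a recommended scoring model. The criteria names, the weights, the 1-5 scale, and the `Scorecard` and `overall_score` names are all illustrative assumptions. The only point it demonstrates is the design choice: the overall score cannot be computed until every interviewer has independently rated every predefined criterion.

```python
from dataclasses import dataclass, field
from statistics import mean

# Hypothetical criteria and weights, agreed before any candidate is seen.
CRITERIA = {"problem_solving": 0.4, "communication": 0.3, "domain_knowledge": 0.3}
SCALE = range(1, 6)  # assumed 1-5 rating scale


@dataclass
class Scorecard:
    """One interviewer's independent ratings for one candidate."""
    interviewer: str
    ratings: dict = field(default_factory=dict)

    def rate(self, criterion: str, score: int) -> None:
        # Only predefined criteria and in-range scores are accepted.
        if criterion not in CRITERIA:
            raise KeyError(f"unknown criterion: {criterion}")
        if score not in SCALE:
            raise ValueError(f"score must be between 1 and 5, got {score}")
        self.ratings[criterion] = score

    def is_complete(self) -> bool:
        return set(self.ratings) == set(CRITERIA)


def overall_score(scorecards: list) -> float:
    """Weighted overall score, refused until every scorecard is complete."""
    if not scorecards:
        raise ValueError("need at least one scorecard")
    incomplete = [c.interviewer for c in scorecards if not c.is_complete()]
    if incomplete:
        raise ValueError(f"incomplete scorecards: {incomplete}")
    # Average each criterion across interviewers, then apply the fixed weights.
    per_criterion = {
        name: mean(card.ratings[name] for card in scorecards)
        for name in CRITERIA
    }
    return round(sum(CRITERIA[n] * per_criterion[n] for n in CRITERIA), 2)


if __name__ == "__main__":
    a, b = Scorecard("interviewer_a"), Scorecard("interviewer_b")
    for card, scores in ((a, (4, 3, 5)), (b, (2, 3, 4))):
        for name, score in zip(CRITERIA, scores):
            card.rate(name, score)
    print(overall_score([a, b]))
```

The refusal to return a score for incomplete scorecards is the environmental constraint here: the process, rather than the interviewer's self-discipline, stops a first impression from standing in for the criteria.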

PS

At SUE, we spend a significant amount of time helping organisations understand why their decisions look rational from the inside but produce systematically suboptimal outcomes. The answer is almost always the same: the decision-making process was designed for a rational actor and implemented by a human one. The gap between those two things is where cognitive bias lives. The good news is that once you know where to look, it is entirely possible to design processes that protect your organisation’s decisions at the level where they are actually made - System 1. If you want to learn how, the Fundamentals course is where I’d start. And if you want to read the theoretical foundation, Astrid’s The Art of Designing Behaviour (2024) is the place.