You know this moment. A strategic project has failed. The results are clear, the numbers speak for themselves. And then it begins. In the evaluation meeting, someone looks around the room and says: “I saw this coming.” A colleague nods: “Yes, the signs were there from the start.” Within ten minutes, the entire group agrees the failure was predictable. That the signals were obvious. That everyone sort of knew it already.

But nobody said it. Not before the decision. Not during the project. Only after the outcome was known did it suddenly become “obvious”.

This is hindsight bias at work. And it is one of the most dangerous cognitive errors in organisations, because it destroys the very thing you need most after a failure: the ability to honestly learn from what actually went wrong.

Hindsight bias is the tendency to believe, after learning an outcome, that you could have predicted it. In the workplace, it sabotages strategy reviews, project post-mortems and hiring decisions, because failures are dismissed as “predictable” rather than investigated. The solution is not awareness but a redesign of your evaluation processes: pre-recorded predictions, structured post-mortems and the SUE Influence Framework.

What is hindsight bias?

Hindsight bias is what happens when your brain rewrites history. The moment you learn the outcome of an event, your memory automatically adjusts the past so that the outcome seems logical and predictable. You remember the warning signs vividly. You forget the noise, the ambiguous data, the perfectly good reasons that existed at the time for deciding differently. It is a classic System 1 process: fast, unconscious and extraordinarily convincing.[1]

Psychologist Baruch Fischhoff described the phenomenon in 1975 as “creeping determinism”: the growing sense that the outcome was inevitable. In his experiments, he asked participants to estimate the probability of historical events. The group that already knew the outcome systematically rated its probability higher than the group that did not. Fischhoff called it the “I knew it would happen” effect.[2]

The bias operates along three dimensions. First, your memory shifts: you genuinely believe your original prediction was closer to the actual outcome than it was. Second, your sense of inevitability grows: you think events could not have played out differently. Third, your sense of understanding grows: in retrospect you construct a clear narrative that explains the outcome, and you forget how confusing the situation actually was at the time.

In their 2012 review, Neal Roese and Kathleen Vohs described hindsight bias as one of the most robust findings in decision psychology. It occurs in young and old, in experts and laypeople, and in simple and complex decisions alike.[3]

The past always looks tidier than it was. Not because you saw things clearly. But because your brain imposes order on chaos after the fact.

Three scenarios where hindsight bias does the most damage

The strategy review where “everyone knew”

Take Nokia. In 2007, Nokia was the most valuable telecommunications company in the world. They held 50% market share in mobile phones. Virtually no analyst on the planet predicted their fall. Five years later, the company had all but vanished from the consumer market.

And yet, if you ask people today whether Nokia’s decline was predictable, almost everyone says yes. “They missed the smartphone revolution.” “They were too slow on touchscreens.” “You could see that coming, couldn’t you?”

No. Almost nobody saw it coming. The situation in 2007 was radically different from how we remember it now. The iPhone operating system was slow. There was no App Store yet. BlackBerry was the business standard. Nokia was investing millions in Symbian. The signals at the time were anything but clear.

This pattern plays out daily in boardrooms. A market offensive fails to deliver. An acquisition proves too expensive. A technology investment stalls. And then the retrospective narrative begins. “The warnings were there.” “The market data was clear.” But if the warnings were so clear, why did nobody act on them?

The answer is simple: because they were not clear at all. They only became so in hindsight.

The post-mortem that teaches nobody anything

At SUE, we regularly guide teams through the evaluation of a failed project. The pattern is almost always the same. The project lead presents the timeline. The results are shown. And then the reconstruction begins.

Within fifteen minutes, the team has built a coherent story about why the project failed. Someone points to a meeting in month two where “the red flags were already visible”. Another remembers an email that “basically said it all”. The story fits. It feels logical. And it is largely fiction.

Not because people are lying. But because their memory rearranges the facts around the known outcome. The email that seems so telling in retrospect was at the time one of a hundred messages in an inbox. The “red flags” in that meeting were surrounded at the time by just as many green signals. But those green signals have vanished from the collective memory.

The consequence is devastating. The team does not learn from the actual causes of the failure, because it believes those causes were already known. Why dig deep when the explanation is staring you in the face? The post-mortem becomes a ritual that provides a feeling of control without producing genuine insight. And the next time around, the team makes precisely the same mistakes.

The hiring decision that was always wrong in hindsight

A manager hires someone. Six months later, the candidate turns out to be underperforming. And then the rewriting begins. “I actually had doubts during the interview.” “There was something about the way they answered.” “I felt it already, but I let myself be swayed by the rest of the team.”

Perhaps that was true. But probably not. The manager now selectively remembers the moments of doubt and forgets the much stronger moments of conviction. Memory adjusts to fit the known outcome. The reverse works equally well. When a hire turns out to be a success, the same manager remembers how they “knew straight away that it was the right call”.

This makes honest evaluation of your selection process nearly impossible. If every failure was predictable in hindsight, why change your interview method? And if every success confirms your ability to spot good people, why introduce structured interviews? Hindsight bias protects bad processes by presenting them as adequate ones after the fact.

Why awareness is not enough

You hear it often: “Now that I know what hindsight bias is, I can watch out for it.” It sounds logical. But it does not work. And the Influence Framework shows precisely why.

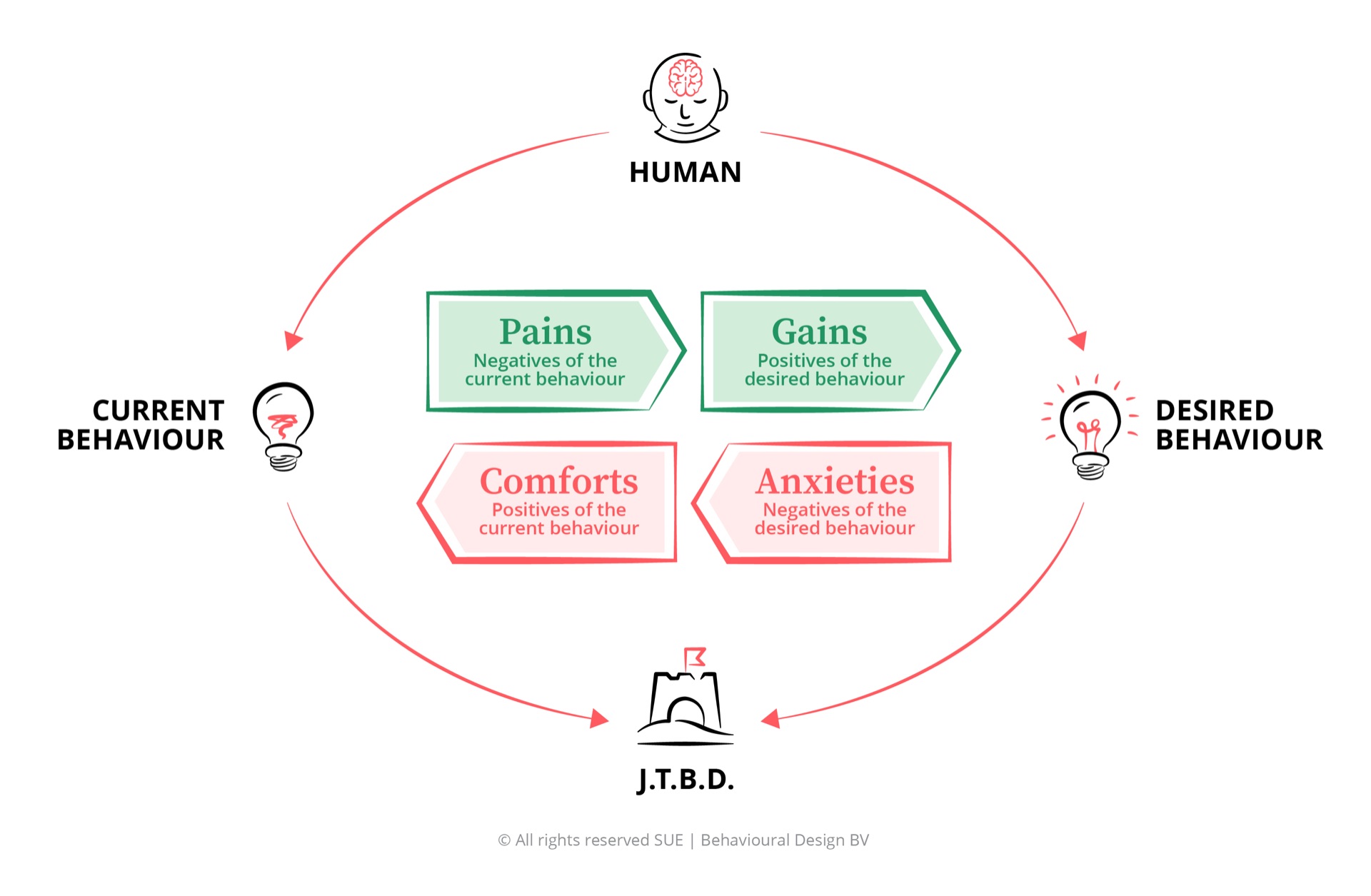

When you analyse hindsight bias at work through the Influence Framework, something striking emerges: the restraining forces are far stronger than the driving forces.

Pains (what pushes you away from current behaviour): organisations repeat mistakes because they fail to learn from previous failures. Strategy reviews miss the real causes. Post-mortems become rituals without insight. These are real costs. But they remain invisible, precisely because hindsight bias creates the feeling that you already understood the situation.

Gains (what draws you towards new behaviour): better evaluations, more honest learning, sharper strategies. Measurable and valuable. But abstract and difficult to feel directly.

Comforts (what keeps you in current behaviour): and here it gets difficult. Hindsight bias feels good. It gives you the sense that you understand the world. That your pattern recognition works. That you are a good judge. This cognitive comfort is one of the reasons the bias is so persistent. Feeling certainty after the fact costs your brain far less energy than living with uncertainty about a complex world.[4]

Anxieties (what holds you back from changing): if you accept that you did not predict the outcome, you must also accept that you cannot predict the future. That is a confronting thought for anyone, but especially for people in leadership positions. They are expected to see things coming. Admitting you did not see it coming feels like a confession of incompetence.

The comfort of retrospective certainty and the fear of incompetence together are powerful enough to override any rational argument about “better learning”. You cannot think your way out of it. The bias operates below conscious thought.

Five interventions that redesign your environment

Record predictions before outcomes are known

The most powerful intervention against hindsight bias is surprisingly simple: write down what you expect before the outcome is known. Force teams to document their predictions at the start of every strategic project. What results do they expect? What risks do they see? What is their estimate of the probability of success? This creates an irrefutable record against which you can test the retrospective narrative. When half the team says “I saw it coming” after a failure, but their documented prediction stated an 80% chance of success, the illusion dissolves on its own.
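If your team keeps its prediction log in a script or spreadsheet, the comparison described above can be made explicit. The Python below is a minimal sketch, not a SUE tool. The `Prediction` record, the `hindsight_drift` function, the example names and the idea of asking people afterwards which probability they remember giving are all illustrative assumptions. A positive drift means someone's memory has moved towards the outcome that is now known.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """A prediction written down BEFORE the outcome is known (hypothetical record format)."""
    person: str
    p_success: float  # documented probability of success, between 0.0 and 1.0


def hindsight_drift(recorded, recalled, succeeded):
    """Return, per person, how far the remembered prediction moved towards the outcome.

    recorded:  list of Prediction objects captured at the start of the project
    recalled:  dict mapping person -> probability they now say they gave
    succeeded: True if the project succeeded, False if it failed
    Positive values mean memory drifted towards the known outcome.
    """
    drift = {}
    for pred in recorded:
        if pred.person not in recalled:
            continue  # this person was not asked afterwards, so there is nothing to compare
        if succeeded:
            # After a success, hindsight inflates the remembered chance of success.
            gap = recalled[pred.person] - pred.p_success
        else:
            # After a failure, hindsight deflates the remembered chance of success.
            gap = pred.p_success - recalled[pred.person]
        drift[pred.person] = round(gap, 2)
    return drift


# Hypothetical example: probabilities documented at the start of the project.
recorded = [Prediction("Anna", 0.8), Prediction("Ben", 0.7), Prediction("Chris", 0.6)]
# After the failure, each person states the probability they remember giving.
recalled = {"Anna": 0.3, "Ben": 0.4, "Chris": 0.6}

for person, gap in hindsight_drift(recorded, recalled, succeeded=False).items():
    print(f"{person}: drift towards outcome = {gap:+.2f}")
```

With these made-up numbers, Anna's and Ben's memories have drifted towards the failure, while Chris remembers their original estimate accurately. The point is not the arithmetic but the design choice: the comparison only works because the original estimate was written down before anyone knew how the project would end.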

Design post-mortems around the process, not the outcome

Standard post-mortems start with the outcome and work backwards. That is an open invitation for hindsight bias. Reverse it. Start with the decision-making process: what information did your team have at the time? What alternatives were considered? What assumptions underpinned the decision? Was the process sound, regardless of the outcome? A decision can have been correct based on the information available, even if the outcome was negative. Process quality and outcome quality are two different things.

Use pre-mortems as standard practice

The pre-mortem flips the entire mechanism. Before a major decision, ask your team: “Imagine it is six months from now and this project has failed. What went wrong?” This gives people permission to name risks without being seen as negative. It shifts the focus from retrospective reconstruction to prospective anticipation. And it creates documentation that later adds nuance to the hindsight narrative.

Normalise uncertainty in leadership language

As long as the culture in an organisation expects leaders to “have seen it coming”, hindsight bias will flourish. Leaders who openly say “I did not know” or “the situation was unclear” give their entire organisation permission to do the same. This is not weakness. It creates an environment in which honest evaluation is possible. Google’s Project Aristotle found that psychological safety is the single most important characteristic of high-performing teams. Openly acknowledging uncertainty is one way to build it.

Separate evaluations from outcomes

Stop evaluating people based on outcomes beyond their control. Evaluate them on the quality of their decision-making process. A poker player who makes the right decision based on the information available but loses the hand has not played badly. The same applies to your project manager, your marketing director and your strategy department. If you only evaluate outcomes, you punish good decision-makers for bad luck and reward poor decision-makers for good luck. And hindsight bias ensures everyone confirms after the fact that it was deserved.

How hindsight bias connects to other biases

Confirmation bias and hindsight bias form a toxic loop. Confirmation bias causes you to selectively filter information before a decision. Hindsight bias then rewrites your memory so it appears you saw everything all along. One bias makes you blind. The other convinces you afterwards that you could see.

Optimism bias amplifies the effect. Before the project begins, you overestimate the chance of success. Afterwards, when the project has failed, hindsight bias rewrites your memory so you believe you predicted the failure. You were too optimistic beforehand and too certain afterwards. Two opposing distortions, both equally convincing.

The availability heuristic also plays a role. After a known outcome, the “relevant” signals become easier to retrieve from memory. They feel more vivid and more significant than they were at the time. That makes the retrospective story even more convincing.

And there is a direct link to what the research literature calls outcome bias: the tendency to judge the quality of a decision based on the outcome rather than the process. A risky decision that works out is called “courageous” after the fact. The same decision that turns out badly is called “reckless”. Hindsight bias enables outcome bias by making the past appear more predictable than it was.

Frequently asked questions

What is hindsight bias in simple terms?

Hindsight bias is the tendency to believe, after learning the outcome of an event, that you would have predicted it. It is also known as the “I knew it all along” effect. The term was introduced by psychologist Baruch Fischhoff in 1975.

How does hindsight bias harm decision-making at work?

Hindsight bias prevents organisations from learning from mistakes. When a project fails and everyone says “I saw that coming”, the failure is dismissed as predictable rather than investigated. The real causes remain hidden, the same mistakes are repeated, and decision-makers are unfairly punished.

What is the difference between hindsight bias and confirmation bias?

Confirmation bias filters information before a decision: you seek evidence that confirms what you already believe. Hindsight bias distorts your memory after an outcome: you believe in retrospect that you saw the result coming. Together they form a toxic loop: confirmation bias causes you to miss relevant signals, and hindsight bias convinces you afterwards that you saw them.

Can you prevent hindsight bias?

Not entirely. It is an automatic System 1 process. But you can limit the damage by redesigning your decision-making environment. Record predictions and decision criteria in advance, use structured post-mortems that focus on the process rather than the outcome, and normalise acknowledging uncertainty rather than claiming certainty after the fact.

What is a real-world example of hindsight bias?

After Nokia’s decline, virtually everyone in the tech world said: “You could see that coming.” But in 2007, when the iPhone was launched, Nokia was the most valuable telecommunications company in the world with 50% market share. Almost no analyst predicted their fall. Hindsight bias rewrites history so the past appears more predictable than it actually was.

Conclusion

Want to learn how to structurally improve decision-making in your organisation? In the Behavioural Design Fundamentals Course, you learn to apply the Influence Framework and the SWAC Tool to diagnose and overcome cognitive biases. Rated 9.7 by 10,000+ professionals from 45 countries.

PS

At SUE, our mission is to use the superpower of behavioural psychology to help people make better choices. Hindsight bias is perhaps the most underestimated enemy of organisational learning. Not because it causes mistakes, but because it prevents you from learning from them. It gives you a reassuring sense of understanding that is based on nothing. The next time you find yourself thinking “I knew it” during an evaluation, ask yourself the honest question: if I really knew, why did I not act on it?