You're in a meeting. Someone pitches a new idea. Within ten seconds you already know what you think of it. You haven't heard a single fact yet, seen a single number. Yet your brain has already formed a judgement.

That's not a weakness. That's how you survive.

But it's also exactly why so many decisions, persuasion attempts and communication campaigns fail. They assume people think before they react, while the brain that Daniel Kahneman describes in Thinking, Fast and Slow works completely differently.

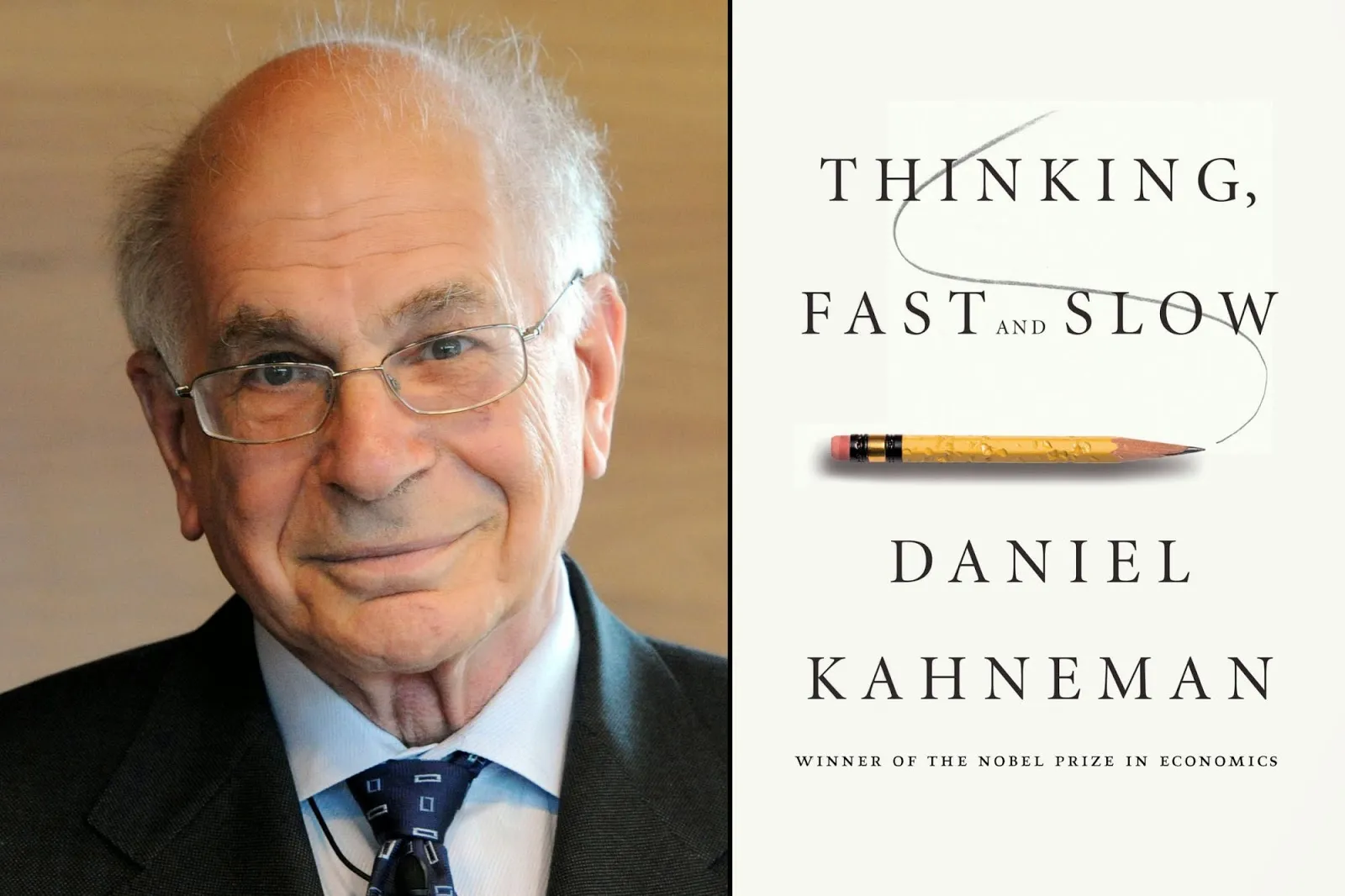

Who was Daniel Kahneman?

Daniel Kahneman (1934-2024) was an Israeli-American psychologist who won the Nobel Memorial Prize in Economic Sciences in 2002. As a psychologist. That is in itself an ironic proof of his central thesis: the world works differently from how we think.

Together with his research partner Amos Tversky, Kahneman spent decades demonstrating something economists preferred not to hear: people are not rational. They are predictably irrational. And that is the key word. Not randomly irrational, but systematically so. In predictable ways, over and over.

His book Thinking, Fast and Slow, published in 2011, summarises that lifetime of research. It is one of the best-selling non-fiction books of the past twenty years. And for anyone who wants to understand how behaviour works, it is essential reading.

System 1 and System 2: how our brain thinks

Kahneman describes two ways of thinking. Not two brain hemispheres, no pop neurology. Two processes.

System 1 is fast, automatic and unconscious. It runs constantly, without you noticing. It assesses faces, recognises patterns, senses danger, forms first impressions. It costs no effort. It demands no energy. It just happens.

System 2 is slow, deliberate and analytical. It is the thinking you deploy when filing a tax return, weighing a chess move or considering a complex decision. It costs energy. It is tiring. And it is engaged as rarely as possible.

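A widely quoted rule of thumb holds that around 96% of our thinking runs through System 1. Four percent through System 2. Treat the number as an illustration rather than a measurement: the point is that System 1 does most of the work.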

By a frequently quoted estimate, we make around 35,000 decisions every day. If every one required System 2, we would be cognitively exhausted by breakfast. System 1 is therefore not a bug but a feature. A survival mechanism that allows us to navigate the world without stopping at every step.

System 2 is the slave of System 1. It converts suggestions into beliefs.

That is perhaps Kahneman's sharpest insight. System 1 sends a signal to System 2. System 2 then searches for confirmation. Not evidence, not falsification. Confirmation. This is why debating so rarely leads to changing minds. You address System 2, while System 1 has already decided.

Kahneman calls this WYSIATI: What You See Is All There Is. System 1 constructs a coherent story from available information, without realising that information is missing. The more convincing the story, the more certain the feeling. And the more certain the feeling, the less System 2 questions it.

Heuristics: System 1's mental shortcuts

To make 35,000 daily decisions, System 1 uses shortcuts. Kahneman calls them heuristics: informal rules of thumb the brain uses to quickly reach a judgement.

They are clever. And they are dangerous.

The availability heuristic is the most frequently illustrated example. The easier an example comes to mind, the higher you estimate the probability that it actually happens often. After a plane crash on the news, people overestimate the risk of flying. After media coverage of violent crime, people estimate crime rates to be higher than they actually are. Your brain uses availability as a proxy for frequency. That is not always accurate.

Anchoring is another mechanism. The first number you see anchors your judgement. William Poundstone describes in Priceless how Stella Artois spent years as the most expensive beer on the menu to be perceived as a premium product. Not because it was better. Because the anchor was set high enough. People pay for the feeling of value, not for value itself.

The representativeness heuristic causes us to judge something based on how well it matches a prototype. Ask someone whether a modest, tidy man who loves poetry is more likely to be a librarian or a truck driver. Most people say librarian. But there are roughly ten times as many truck drivers as librarians, so even a description that fits librarians far better still points to a truck driver. System 1 ignores base rates and chooses the most recognisable pattern.
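The base-rate argument can be checked with Bayes' rule. The sketch below is illustrative only: the ten-to-one ratio comes from the example above, while the two "fit" probabilities (how often the description would match a librarian or a truck driver) are invented numbers chosen for the calculation.

```python
def posterior_librarian(drivers_per_librarian, fit_librarian, fit_driver):
    """Probability that the man is a librarian, given the description (Bayes' rule)."""
    # Base rates: the share of each profession before hearing the description.
    prior_librarian = 1 / (1 + drivers_per_librarian)
    prior_driver = 1 - prior_librarian
    # Total probability of meeting someone who matches the description.
    evidence = fit_librarian * prior_librarian + fit_driver * prior_driver
    return fit_librarian * prior_librarian / evidence


# Hypothetical assumption: the description fits 40% of librarians
# but only 10% of truck drivers (four times as representative).
p = posterior_librarian(10, fit_librarian=0.40, fit_driver=0.10)
print(f"P(librarian | description) = {p:.2f}")  # prints 0.29
```

Even with a description that is four times more typical of librarians, the man is still more likely to be a truck driver, because the base rate outweighs the resemblance.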

Cognitive biases: when shortcuts produce errors

Heuristics work well enough for everyday life. But in complex, modern contexts, such as recruitment, strategy, customer behaviour and organisational change, they produce systematic errors. Kahneman calls them cognitive biases.

At SUE, we receive questions from HR managers almost daily about improving their recruitment processes. And almost always the same mechanism is at play. Research shows that decisions about candidates are made within the first ten minutes of an interview. Not after asking structured questions. Not after reviewing competency profiles. Within ten minutes.

What happens then: System 1 forms a first impression based on facial expression, voice, clothing, handshake. Similarity to the interviewer plays a large role (the similarity bias). After that, System 2 activates and searches for evidence that confirms the initial impression. This is called confirmation bias. The outcome is fixed before the first substantive answer has been given.

The same dynamic plays out in strategic decision-making. A management team that has been invested in a particular direction for months will become progressively less open to counter-evidence. Not because they are foolish, but because System 1 has constructed a coherent story and System 2 is loyal to that story. This is the sunk cost fallacy in action.

Kahneman's central message is not that people are irrational and need to fix that. His message is that we are predictably irrational. And that is the difference that changes everything.

Why Thinking, Fast and Slow is the foundation of behavioural design

If 96% of our behaviour runs through System 1, that has one direct implication for anyone who wants to influence behaviour.

Informing people does not work.

Or at least: informing alone does not work. Information is System 2 fuel. But if people operate through System 1 96% of the time, you reach only 4% of the decision process with information. Think of anti-smoking campaigns. Healthy eating apps. Emails with "awareness training". They appeal to the conscious, rational brain. While the automatic, unconscious brain determines the behaviour.

The only effective strategy: design the environment so that desired behaviour becomes automatic for System 1. Change the context, not the person.

This is exactly the foundation of the SUE Influence Framework. The framework helps you understand the unconscious forces driving behaviour: the pains people want to avoid, the gains they pursue, the comforts blocking change, and the anxieties that paralyse them. Only once you understand those forces can you design interventions that speak to System 1.

Concrete examples of how this works:

- Defaults: in countries where organ donation is the standard (opt-out), registered consent rates are typically above 85%. In opt-in countries, they are far lower, often below 30%. People's attitudes are much the same; the context differs. System 1 takes the easiest path.

- Social norms: hotels that say "most guests in this room reuse their towel" see more reuse than hotels that explain how good it is for the environment. System 1 follows the herd.

- Framing: "5% chance of death" sounds more dangerous than "95% survival rate". The same information, opposite emotional responses. System 1 reacts to the frame, not the content.
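The defaults example in the list above can be reduced to simple arithmetic. The sketch below is a toy model, not an empirical one: both parameters (the share of people who never change the default, and the share of active choosers who say yes) are hypothetical values picked for illustration.

```python
def participation(default_is_donor, stay_with_default, active_yes_share):
    """Share of people registered as donors under a given default."""
    # People who make an explicit choice instead of accepting the default.
    active = 1 - stay_with_default
    active_donors = active * active_yes_share
    # Everyone who accepts the default is a donor only if the default says so.
    passive_donors = stay_with_default if default_is_donor else 0.0
    return passive_donors + active_donors


STAY = 0.70  # hypothetical: 70% never touch the default
YES = 0.60   # hypothetical: 60% of active choosers register as donors

opt_out = participation(True, STAY, YES)
opt_in = participation(False, STAY, YES)
print(f"opt-out: {opt_out:.0%}, opt-in: {opt_in:.0%}")  # opt-out: 88%, opt-in: 18%
```

The population and its preferences are identical in both runs. Only the default changes, and with it the outcome.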

This is why behavioural design is so effective, and why awareness campaigns deliver so little. They are two approaches to the same problem, but only one has biology on its side.

Kahneman in practice: what you can do tomorrow

Knowledge of Thinking, Fast and Slow is only valuable when you act on it. Here are three concrete applications.

Pre-mortems in decision-making. Before making a strategic decision, ask the team to imagine it has already failed. "It's January 2027. The project has completely collapsed. What went wrong?" By letting System 1 imagine failure, you surface information that confirmation bias would normally suppress. Gary Klein developed this technique and Kahneman describes it as one of the most effective ways to counter groupthink.

Structured interviews. Not to eliminate System 1, but to give System 2 more influence. The same protocol for every candidate, scoring rubrics per competency, multiple evaluators. Not because this switches off your gut feeling, but because it makes it harder for System 1 to drive alone. See also: cognitive biases at work.
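A scoring rubric with multiple evaluators can be as simple as the sketch below. It is one possible setup, not a prescribed method: the competency names, the 1 to 5 scale and the equal weighting are all assumptions made for the example.

```python
from statistics import mean

# Hypothetical rubric: every candidate is scored on the same competencies.
COMPETENCIES = ["analysis", "collaboration", "communication"]


def candidate_score(ratings):
    """Average each competency across evaluators, then average the competencies.

    ratings: {evaluator_name: {competency: score on a 1-5 scale}}
    """
    per_competency = {
        c: mean(scores[c] for scores in ratings.values()) for c in COMPETENCIES
    }
    return per_competency, mean(per_competency.values())


# Hypothetical scores, recorded independently before any discussion.
ratings = {
    "evaluator_a": {"analysis": 4, "collaboration": 3, "communication": 5},
    "evaluator_b": {"analysis": 3, "collaboration": 3, "communication": 4},
    "evaluator_c": {"analysis": 4, "collaboration": 2, "communication": 4},
}

per_competency, overall = candidate_score(ratings)
for name, score in per_competency.items():
    print(f"{name}: {score:.2f}")
print(f"overall: {overall:.2f}")
```

Having evaluators record scores separately, before comparing notes, keeps one person's first impression from anchoring the rest of the panel.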

Environment design. If you want people to eat more healthily in the canteen, put fruit by the checkout and crisps at the back. If you want employees to save more, make automatic saving the default. If you want team members to give feedback more often, build a weekly check-in into the calendar. Not as an option, but as a default.

Kahneman's work does not give you tricks. It gives you a lens. A way of seeing why people do what they do, even when it seems irrational. And once you have that lens, you see opportunities everywhere to design the context so that good behaviour is not the exception, but the rule.

Frequently asked questions about Thinking, Fast and Slow

What is Thinking, Fast and Slow about?

Thinking, Fast and Slow is a 2011 book by Daniel Kahneman about how people make decisions. Kahneman describes two systems: System 1 (fast, automatic, unconscious) and System 2 (slow, deliberate, analytical). He demonstrates that people are predictably irrational and that most decisions are made through System 1.

What is the difference between System 1 and System 2?

System 1 is fast, automatic and effortless. It processes information unconsciously and uses heuristics. System 2 is slow, deliberate and analytical. It is engaged for complex reasoning but is cognitively costly. By a widely quoted rule of thumb, around 96% of our thinking runs through System 1. For a deeper explanation, see System 1 and System 2 explained.

What are cognitive biases?

Cognitive biases are systematic thinking errors that arise because System 1's heuristics do not always lead to correct conclusions. They are predictable and repeatable. Examples include confirmation bias, anchoring bias and the availability heuristic. See our overview of cognitive biases at work.

What are heuristics?

Heuristics are mental shortcuts System 1 uses to make fast decisions without consciously analysing everything. They save cognitive energy but can produce systematic errors. The best-known are the availability heuristic, anchoring and representativeness.

How does Thinking, Fast and Slow apply to organisations?

By designing the environment rather than trying to persuade people. Make desired behaviour the easiest choice: use defaults, social norms and nudges that speak to System 1. Informing and training only work as supporting instruments alongside environment-level interventions.

Is there a connection between Kahneman and behavioural economics?

Kahneman is, together with Amos Tversky and Richard Thaler, considered one of the founders of behavioural economics. His work showed that economic models based on the rational agent do not describe how people actually decide, and laid the foundation for a new discipline that places human behaviour at its centre.

PS

At SUE, we apply Kahneman's insights every day. Not as an academic foundation, but as a working lens on the world. Every time a client asks us why their communication campaign is not working, why their HR policy is backfiring, or why people are not using their beautifully designed app, the answer is almost always the same: you are talking to System 2, while System 1 is making the decision. Kahneman wrote it down. We design the solution.