The 7 psychological traps of financial behaviour

A pension adviser explains precisely why their client should save more. The client nods, understands entirely, and does nothing. An investment analyst sees the market correction coming, knows rationally it is temporary, and sells anyway. A risk committee reviews a credit application and reaches consensus within five minutes - without anyone asking a single question.

These are not isolated incidents. They are symptoms of seven psychological mechanisms that systematically drive financial behaviour. They are not random or unpredictable. They are documented by Kahneman, Thaler and Shiller, confirmed in thousands of studies, and visible in every training programme we run at SUE for financial institutions.[1]

This article goes deeper into the seven forces already introduced on our financial services sector page. For each mechanism: what it is precisely, why it is so persistent in financial contexts, a concrete case, and how you can design around it.

The psychology of financial behaviour describes how cognitive biases and emotional patterns influence financial decisions - from saving and investing to risk policy and boardroom decisions. The seven most influential mechanisms are: present bias, loss aversion, status quo bias, anchoring, herd behaviour, overconfidence and mental accounting. They are predictable, and therefore designable.

Why financial behaviour is so much harder than it looks

Financial services is one of the sectors where the gap between knowing and doing is widest. Clients know they should save more, diversify their portfolios, and stop making decisions based on recent market movements. They know - and still do not act on it.

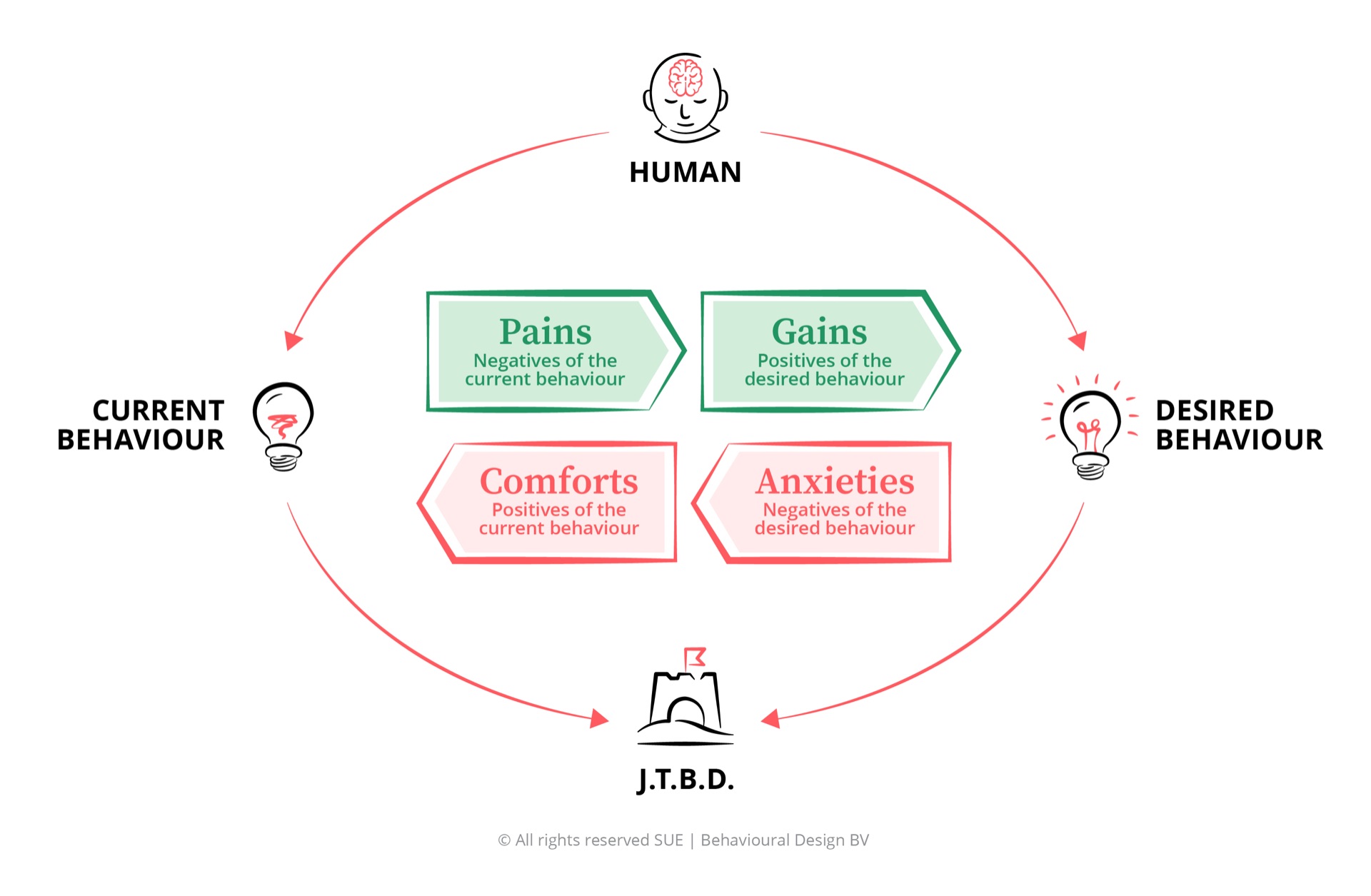

The reason: financial behaviour is predominantly driven by System 1 - the fast, automatic, unconscious part of the brain that handles roughly 96% of all decisions.[2] Financial advice, fund prospectuses and risk reports speak exclusively to System 2 - the rational, analytical side that handles just 4% of decisions. That mismatch is the actual problem.

The seven traps below are all System 1 phenomena. They operate unconsciously, quickly and powerfully. And all of them are designable - if you know how.

Trap 1 - Present bias: the present is always too attractive

Present bias is the brain's tendency to weight immediate rewards far more heavily than future rewards - even when the future reward is objectively larger. Economists call this hyperbolic discounting: the discount people apply to future value is not constant per year but steepest for the shortest delays, so the step from "now" to "later" costs far more than the same delay further in the future. A reward of £1,500 in two years feels less attractive to most people than £1,000 now, even though the maths clearly favours the future option.
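The difference between constant-rate (exponential) and hyperbolic discounting can be made concrete with a small sketch. The two discount functions are the standard textbook forms; the parameter values `delta` and `k` and the function names are illustrative assumptions, not estimates from the studies cited in this article.

```python
def exponential_value(amount, years, delta=0.8):
    """Present value under a constant annual discount factor `delta` (assumed value)."""
    return amount * delta ** years


def hyperbolic_value(amount, years, k=1.0):
    """Present value under hyperbolic discounting: amount / (1 + k * years)."""
    return amount / (1 + k * years)


def prefers_later(value_fn, sooner, later):
    """True if the later (amount, delay) option has the higher present value."""
    return value_fn(*later) > value_fn(*sooner)


# Choice A: 1,000 now versus 1,500 in two years.
near = ((1000, 0), (1500, 2))
# Choice B: the same pair of options, pushed ten years into the future.
far = ((1000, 10), (1500, 12))

for name, fn in [("exponential", exponential_value), ("hyperbolic", hyperbolic_value)]:
    print(name, prefers_later(fn, *near), prefers_later(fn, *far))
```

With these assumed parameters the exponential discounter gives the same answer to both choices, while the hyperbolic discounter takes the smaller amount when it is available now but prefers the larger amount when both options lie in the future. That reversal is what makes "I will start saving next year" feel sincere today and unattractive when next year arrives.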

In finance, this is the core mechanism behind the pension savings gap. People consistently report knowing they save too little - research shows close to 80% acknowledge this. But the reward (a comfortable retirement) is thirty years away. System 1 processes that as abstract and distant. The pain of having less now feels concrete and immediate. Every quarter in which someone decides to start saving later is an understandable and psychologically predictable outcome of this mechanism.

"Influence is far more judo than karate. You work with the forces already present, and redirect them."

How to design around it. Thaler and Benartzi developed the Save More Tomorrow programme (SMarT): employees commit now to higher savings contributions that only kick in at their next pay rise.[3] The pain of having less never arrives, because the increase is taken out of the pay rise and take-home pay does not fall. ING Netherlands applied comparable logic by adding a single question to their pension enrolment form that makes the future concrete: "Imagine what your life looks like when you retire comfortably." One question. The result: 20% more enrolments. Present bias weakens when the future feels like now.
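The escalation mechanism can be sketched in a few lines. This is a simplified illustration, not the programme's actual rules: the function name, the starting salary, the 3% annual pay rise, the 2-point contribution step and the 13% cap are all assumed values, and taxes are ignored.

```python
def smart_schedule(salary, start_rate, step, cap, raise_pct, years):
    """Yield (year, salary, savings_rate, take_home) for an escalating plan.

    At each annual pay rise the savings rate goes up by `step` until it
    reaches `cap`. All inputs are illustrative; taxes are ignored.
    """
    rate = start_rate
    for year in range(years + 1):
        take_home = salary * (1 - rate)
        yield year, round(salary, 2), round(rate, 4), round(take_home, 2)
        salary *= 1 + raise_pct          # next year's pay rise
        rate = min(rate + step, cap)     # part of that rise goes to savings


rows = list(smart_schedule(30_000, 0.03, 0.02, 0.13, 0.03, 6))
for year, salary, rate, take_home in rows:
    print(f"year {year}: salary {salary:>9.2f}  rate {rate:.0%}  take-home {take_home:>9.2f}")
```

In this sketch the savings rate climbs from 3% to 13% while take-home pay rises slightly every year. That only holds because the assumed step is smaller than the pay rise; a step larger than the rise would reintroduce the felt loss the design is meant to avoid.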

Trap 2 - Loss aversion: losses weigh twice as heavy

Kahneman and Tversky showed in 1979 that people experience losses as roughly twice as painful as equivalent gains are pleasant.[1] This sounds abstract. The implications are concrete and measurable in every investment portfolio.

During a market correction, a significant proportion of investors sell at precisely the lowest point. Not because they are irrational, but because the pain of falling prices - live and visible in the app - overrides the long-term calculation. The availability heuristic amplifies this: recent loss stories are hyper-present in the mind and make estimated risk feel larger than it statistically is. Every news report about market falls increases the mental availability of loss - making the decision to sell feel psychologically logical.

Loss aversion also operates on the product design side. Research consistently shows that "what does it cost you if you don't do this?" is a far more powerful activation than "what will this earn you?". An adviser who says "at your current trajectory you will have a shortfall of £350 per month at retirement" is activating loss aversion - a force twice as strong as the pull of gain.
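The asymmetry can be written as a value function. The sketch below uses the functional form and the median parameter estimates from Tversky and Kahneman's 1992 follow-up work (loss-aversion coefficient 2.25, curvature 0.88). The function name is hypothetical, and the parameters are illustrative values rather than constants that hold for every client.

```python
def subjective_value(outcome, loss_aversion=2.25, curvature=0.88):
    """Prospect-theory style value of a gain or loss relative to a reference point."""
    if outcome >= 0:
        return outcome ** curvature
    # Losses use the same curvature but are scaled up by the loss-aversion coefficient.
    return -loss_aversion * (-outcome) ** curvature


gain = subjective_value(350)
loss = subjective_value(-350)
print(f"felt value of +350: {gain:.1f}")
print(f"felt value of -350: {loss:.1f}")
print(f"loss/gain ratio: {abs(loss) / gain:.2f}")
```

Under these assumptions a shortfall of 350 per month weighs 2.25 times as much as an extra 350 per month, which is why the shortfall framing tends to move people more than the gain framing.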

How to design around it. Loss frames are not manipulation when they are accurate. The task is to deploy them ethically: activating loss aversion for behaviour that is genuinely in the client's interest. Concrete shortfall insights in pension overviews, scenario visualisations showing what goes wrong with inaction, and coaching that makes the pain of the current path discussable - these are all applications that work with the brain rather than against it.

Trap 3 - Status quo bias: the familiar always beats the better

Samuelson and Zeckhauser described in 1988 how people systematically prefer existing situations over change, even when the alternative option is objectively better.[4] The effort of switching feels larger than the benefit - even when the benefit is substantial.

In financial services this is the mechanism behind client inertia. On average, consumers stay with the same bank for more than ten years and the same insurer for more than fifteen, regardless of competing offers. Not because their current provider is better, but because the effort of switching - filling in forms, arranging transfers, learning new apps - feels larger than the expected benefit. Status quo bias makes the current situation the default choice, regardless of its quality.

The most cited macro-level example is organ donation. In opt-in countries (the default is: not a donor), consent rates average 20-27%. In opt-out countries (the default is: donor), the rate is close to 100%.[5] Same people, same values, same decision. Only the context differs. Whoever designs the default is, in effect, designing the behaviour of most clients.

How to design around it. Automatic enrolment in savings programmes works for the same reason: not persuading, but adjusting the default. With automatic enrolment using opt-out, participation in pension funds rises from below 40% to above 90%. The same principle applies to portfolio rebalancing (automatic), continuing insurance (automatic renewal with transparent opt-out) and savings goals (automatic increases linked to pay rises).

Trap 4 - Anchoring: the first number sets everything

Anchoring is one of the most documented cognitive biases: the first number someone sees anchors all subsequent judgements - even when that first number is arbitrary or irrelevant. Kahneman and Tversky showed that subjects exposed to a random number on a spinning wheel gave consistently anchored estimates on entirely unrelated questions.

In financial services, anchoring is visible every day. In mortgage conversations, the first figure mentioned sets the range within which negotiation takes place. In investment advice, the purchase price of a share colours the selling decision - rather than the current market value or future outlook. In product presentations, a higher price shown first makes all subsequent options appear cheaper than they are.

The Stella Artois principle is the most cited example in marketing psychology: the brand positioned itself for decades as the most expensive beer in the bar, not because it was objectively better, but because the higher price served as an anchor for quality perception. The same dynamic operates in financial products: a fund costing £200 per month looks relatively affordable when placed next to one at £350.

How to design around it. Conscious management of anchoring means both protection and strategy. Protection: in risk committees and advisory conversations, deliberately choose the sequence of information so that the first number does not unconsciously determine the judgement. Strategy: in choice architecture for clients, position the preferred option not as cheapest but as the reference point - so that value perception aligns with the price you want to charge.

Trap 5 - Herd behaviour: social norms as the strongest signal

People are social animals. What others do is, for the brain, the most powerful signal that something is good or right - stronger than statistics, fund prospectuses or risk models. This herd behaviour is evolutionarily logical: in most situations the group is a more reliable guide than individual assessment.

In financial markets, this is the mechanism behind bubbles and crashes. Investors buy during a rising market not because they have calculated fundamental value, but because everyone appears to be buying. The social norm is: this is smart. The availability heuristic amplifies this: stories of people who gained a lot are shared far more widely than loss stories, so the perception of success becomes inflated. The reverse applies in crashes: panic spreads as social information. Everyone is selling, so it must be wise.

This pattern was visible during the dotcom bubble of 2000, the GameStop episode of 2021, and the crypto bubble of 2021 - each a case of herd behaviour overriding fundamental value analysis.

How to design around it. Herd behaviour can be deliberately deployed as social proof for desired behaviour. "Most clients in your situation opt for automatic rebalancing" makes the social norm visible as factual feedback. "8 out of 10 of your colleagues at this organisation have enrolled in the share plan" activates conformity for behaviour that benefits the participant. Social norms are not inherently manipulative - the ethical question is whether the norm you communicate is accurate and in the person's interest.

Trap 6 - Overconfidence: experts underestimate risk the most

Overconfidence bias is the tendency to overestimate one's own competence, knowledge or judgement. What makes this bias particularly striking in financial contexts: it is amplified by expertise, not reduced by it. The more someone knows about a domain, the stronger their sense of being able to assess risk - and the weaker their tendency to question their own judgement.

In boardrooms this produces a combination of three reinforcing patterns. First: the HIPPO effect (Highest Paid Person's Opinion). The opinion of the most senior person in the room sets the tone of the discussion, after which others unconsciously adjust their position towards that of the leader. Second: optimism bias in forecasts. Business plans submitted for approval are systematically too optimistic - not through bad intentions, but because people consistently overestimate the probability of positive outcomes. Third: confirmation bias in risk assessment - seeking information that confirms the already formed view rather than challenging it.[6]

The effect was visible in the run-up to the 2008 financial crisis, where risk managers at major banks used models built on assumptions that were never systematically challenged. Not because they were incompetent, but because the conviction that "we understand this domain" made questioning those assumptions psychologically uncomfortable.

How to design around it. Pre-mortems are the most underused technique in financial decision-making. Before a major decision is taken - an acquisition, a credit extension, a systems investment - the team imagines it is two years later and the project has failed completely. "What went wrong?" This breaks confirmation bias because System 1 is asked to construct a failure scenario, rather than seek confirmation of the view already formed. Gary Klein developed the technique; Kahneman described it as one of the most effective ways to counter groupthink.

Trap 7 - Mental accounting: money has no label, people do

Mental accounting is one of the concepts for which Richard Thaler received the 2017 Nobel Prize in Economics. People mentally divide money into separate pots with their own rules and different spending thresholds.[7] Holiday pay feels different from salary, even at an identical amount. A tax refund more readily goes towards a luxury purchase than saved money would. A bonus is spent differently from an inheritance, even though both are unexpectedly received funds.

For financial professionals this is directly relevant, because it explains behaviour that is incomprehensible from classical economics. People with consumer credit at 15% interest simultaneously maintain a savings account at 2%. Rationally they should use their savings to repay the credit - saving 13 percentage points per year. They do not, because the savings sit in a different mental pot with different rules. "That savings is for emergencies." The credit is something else.
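The arithmetic behind that example is simple. In the sketch below the balance of 5,000 and the function name are assumptions for illustration; the rates are the ones from the text, and simple annual interest is assumed.

```python
def annual_cost_of_separate_pots(balance, credit_rate, savings_rate):
    """Net yearly cost of keeping `balance` in savings while owing the same amount on credit."""
    interest_paid = balance * credit_rate
    interest_earned = balance * savings_rate
    return interest_paid - interest_earned


cost = annual_cost_of_separate_pots(5_000, credit_rate=0.15, savings_rate=0.02)
print(f"net cost per year: {cost:.2f}")
```

On these figures, keeping the two pots separate costs 650 per year. That is the price the client pays for keeping the emergency label intact.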

The same dynamic explains why some people never touch their pension - it sits mentally in a separate pot that feels taboo - while simultaneously running an overdraft on their current account.

How to design around it. Mental accounting can be deliberately used in product design. Savings goals with a name ("school fees 2030", "renovation 2028", "emergency fund") generate higher contributions than a generic savings account, at identical interest rates. Pot-banking - popularised by apps such as Monzo and Starling - is built directly on this insight. By labelling money, the behaviour around it changes. The same principle applies to pensions: a pension dashboard that visualises the shortfall as a concrete monthly amount ("you are missing £340 per month at retirement") activates mental accounting in the right direction.

Frequently asked questions about the psychology of financial behaviour

What is the biggest psychological trap in financial behaviour?

It depends on context, but loss aversion is the most pervasive. Kahneman and Tversky showed that people experience losses as roughly twice as painful as equivalent gains are pleasant. This explains panic selling during market corrections, excessive caution in risk policy, and resistance to change in investment portfolios. Loss aversion combines with status quo bias: the familiar feels safe, even when it is suboptimal.

What is the difference between present bias and loss aversion?

Present bias is about the time dimension: the brain over-discounts future rewards relative to immediate gratification. This explains procrastination in pension saving and difficulty with long-term goals. Loss aversion is about pain asymmetry: loss feels heavier than an equivalent gain. Both biases can reinforce each other - the pain of having less now, combined with a vague future reward, makes pension saving doubly difficult.

What is mental accounting and why is it relevant for financial products?

Mental accounting is the phenomenon described by Richard Thaler: people mentally divide money into separate pots with their own rules. Holiday pay, tax refunds and bonuses are spent differently from regular salary, even at identical amounts. For financial professionals this matters because the way you label and present money directly influences how it is spent or saved. A savings product with a clear label (the name of a goal) generates higher contributions than a generic savings account.

How can financial professionals work with status quo bias in clients?

By designing the default rather than persuading. In countries where pension saving is automatic (opt-out), participation is 80-90%. In opt-in countries it is 15-30%. Same clients, same products, different context. If you want clients to display the desired behaviour, make that behaviour the default: automatic rebalancing, automatic saving increases, automatic renewal of good products.

Why do experts underestimate risk more often than non-experts?

Overconfidence bias is amplified by expertise, not reduced by it. The more someone knows about a domain, the stronger their sense of being able to assess risk - and the weaker their tendency to question their own judgement. In boardrooms this leads to systematic underestimation of uncertainty, combined with the HIPPO effect and optimism bias in forecasts. Pre-mortems, structured dissent and anonymous risk input break this pattern.
